Pricing AI for Distribution: A Practitioner’s Playbook

Lessons from the field on using pricing to drive adoption, build sticky habits, and create an unfair advantage in AI markets

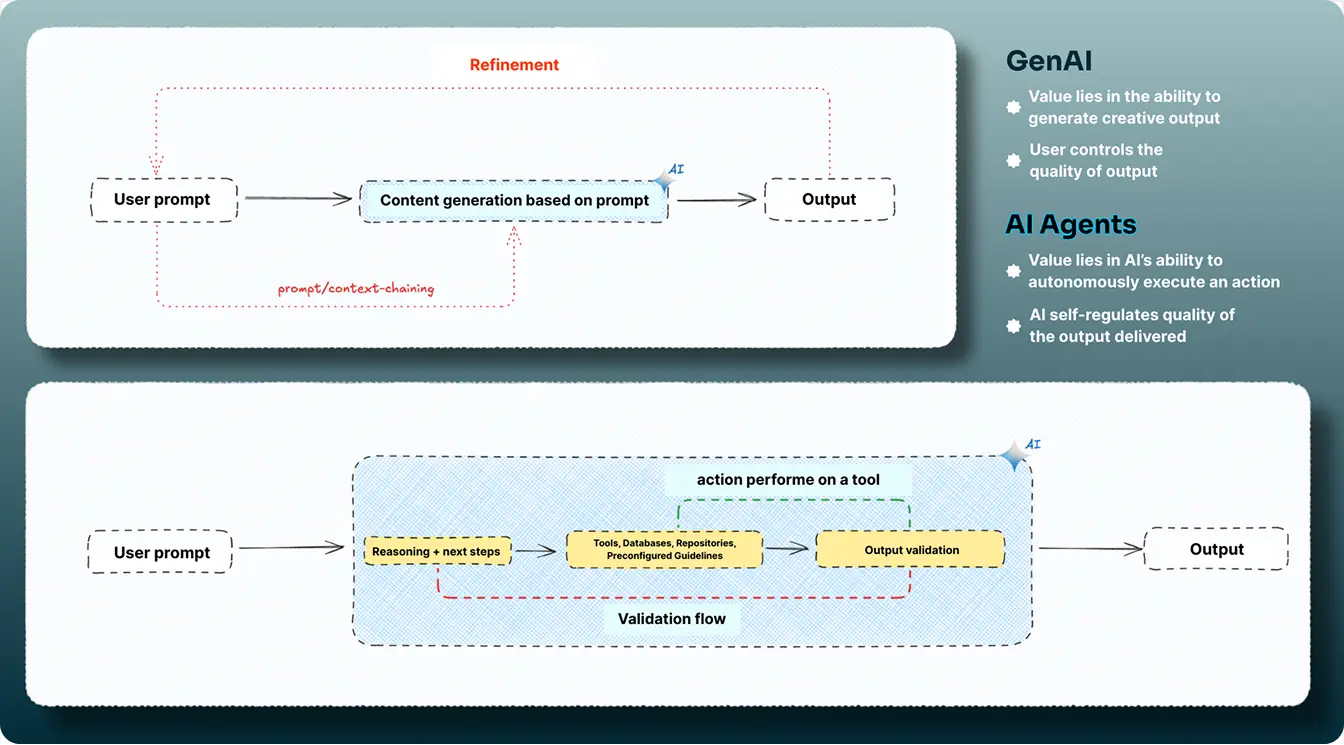

AI’s biggest power is capability arbitrage: chain autonomous reasoning and workflow orchestration with your product, and it fans out into adjacent jobs without requiring heavy rebuilds. Suddenly, no adjacent task is out of reach, no competitor is untouchable, and no moat is permanent.

When capabilities converge quickly, and everyone’s product starts to look similar on the surface, the real differentiator becomes distribution and adoption. Businesses that achieve better adoption early on can drive product enhancements through usage-driven feedback and form resilient habits, making it much harder for users to switch to a competitor.

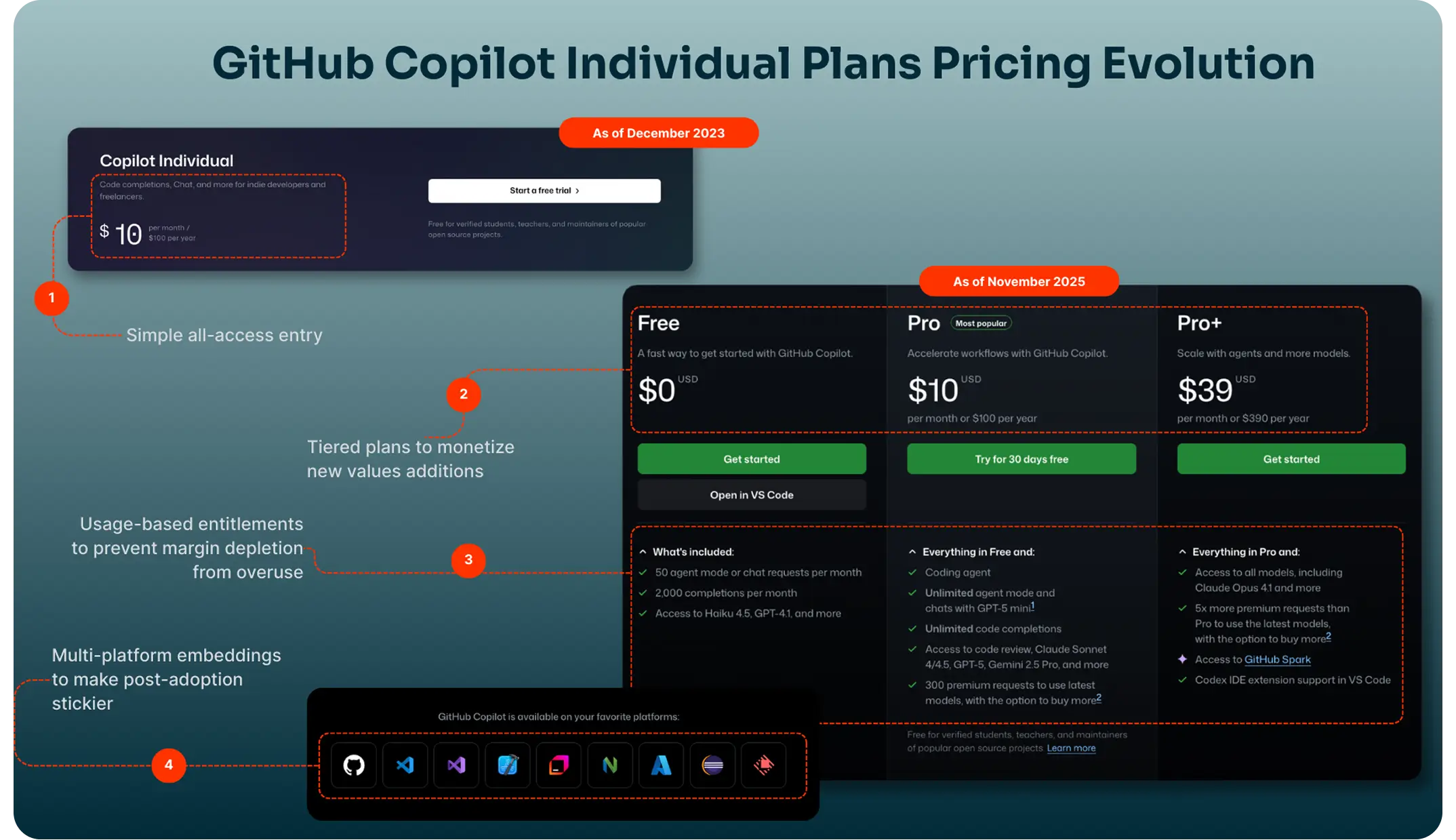

When GitHub launched Copilot, it began evolving from a repository host into a comprehensive coding agent. Today, it leads the coding agents market while competing with the likes of Cursor, Replit, Cognition, and Lovable.

Much of Copilot’s traction stemmed from using monetization as a lever for adoption. GitHub set an all-inclusive $10/month entry price for individuals to seed habit and distribution, then allowed price and controls to scale as capabilities (and variable AI costs to deliver said capabilities) expanded.

But here’s the paradox: in AI, growth burns cash. By the end of 2023, reports surfaced that GitHub was losing roughly $20 a month on every customer on its Copilot Individual plan. In other words, AI monetization has to work twice as hard: it must drive product adoption without sacrificing sustainability.

To understand how companies are navigating this balance, we spoke with some of the most experienced pricing and monetization leaders across the AI and SaaS ecosystem to unpack how pricing is one of the most potent growth levers of the AI age.

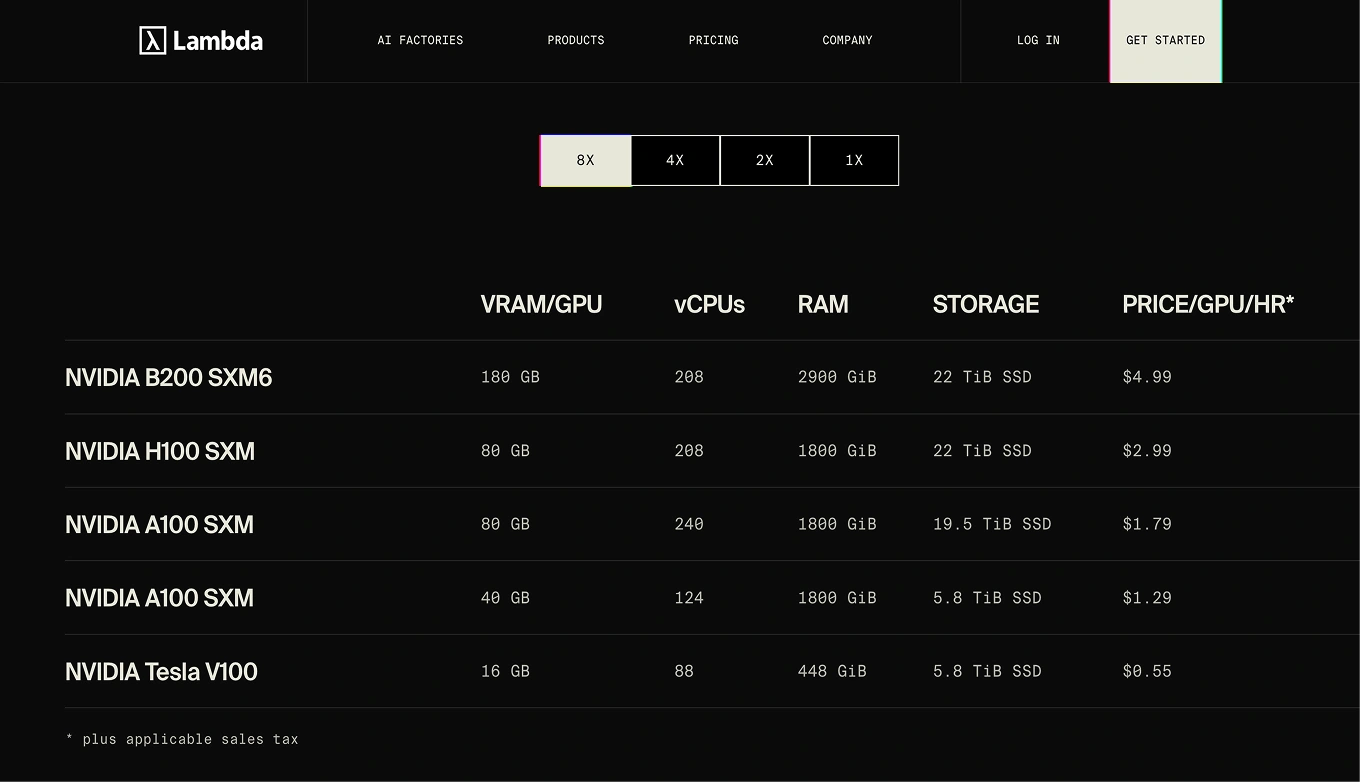

If there is one thing pricing leaders universally agree on, it is this: pricing an AI product is nothing like pricing SaaS. Adoption curves, cost structures, and customer behavior all look different. In SaaS, marginal cost trends toward zero. In AI, every call, workflow, or retrieval carries variable costs that scale with model size, context depth, and data quality.

This makes pricing and monetization tougher. You’re not just pricing for value. You’re pricing for exploration, unpredictability, and unit economics at the same time.

- Brian Balfour, CEO, Reforge

Hidden within Brian’s observations are all the patterns we are observing in the market with AI businesses monetizing their solution:

In AI, the temptation to monetize early is strong. Usage is spiky, and every additional inference burns a little more cash. However, experts argue that early lock-ins matter more—sometimes even at the cost of subsidized pricing—than myopic monetization.

- Tomasz Tunguz, General Partner, Theory Ventures

AI markets don’t reward the most polished product; they reward the product that becomes a habit first. This happens because of the following reasons:

Essentially, with AI, distribution moats compound faster than feature moats. Because once a user has tuned prompts, built workflows, integrated your agent, or simply developed muscle memory, switching doesn’t feel like trying something new. It feels like paying the cognitive tax twice. This is best explained by one of Tomasz Tunguz’s recent essays:

“As AI capabilities start to converge in performance, switching costs increase, which means customers are likely not to switch over from their existing vendor:

By Monday lunch, I had burned through my Claude code credits. I’d been warned; damn the budget. Now two days on, I’ve needed to figure out alternatives… Switching between tools incurs costs. The tools, the workflow, the prompts that I’ve optimized for Claude code must all be ported (at my expense!) to other tools.

As the capabilities of these models begin to plateau, the costs to shift increase. So does my willingness to pay for Claude to answer me.”

This is why many early AI products quietly subsidize their first wave of customers:

The rationale is simple:

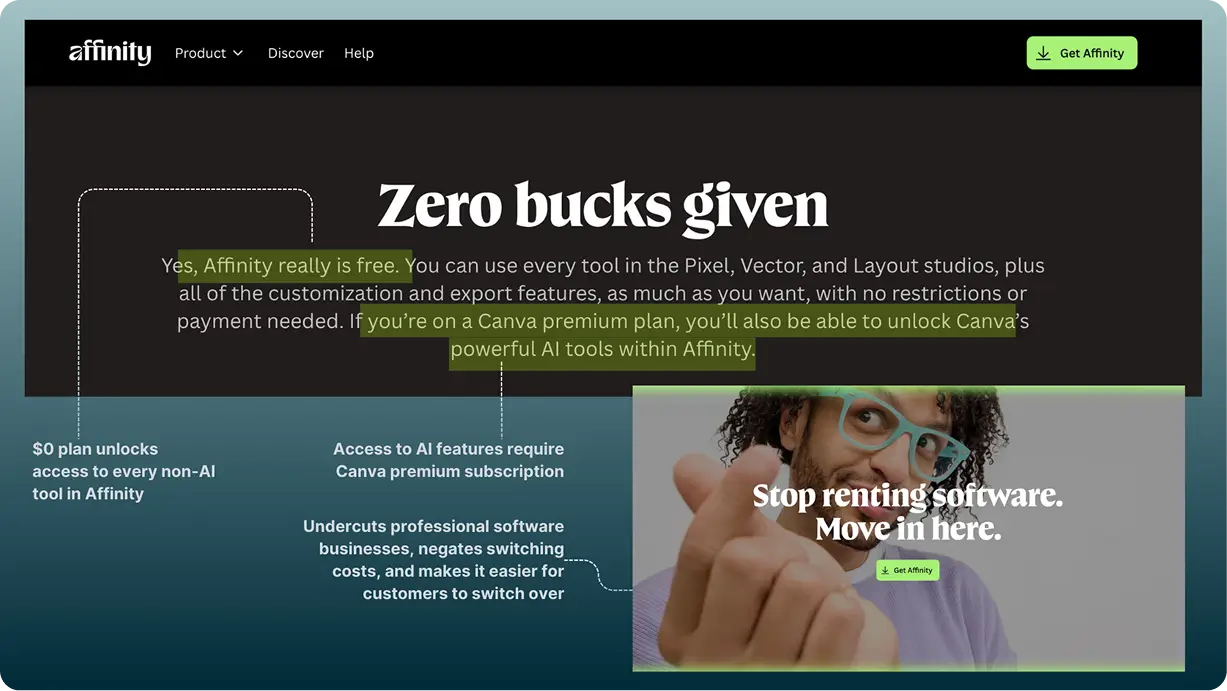

“When more pros use Affinity, more teams end up using Canva. Pro designers lead the visual workflow. If they build in Affinity, they're more likely to scale in Canva. And when they do, marketing, sales, and the rest of the business will follow. That is how it grows.”

Cameron Adams, Co-founder and Chief Product Officer, Canva

With the line between traditional SaaS companies and AI-native businesses blurring, AI usage may still resemble traditional SaaS demand curves, but it behaves more like cloud infrastructure cost curves.

As users become more comfortable, they don’t just use your product more; they also become more engaged. They push its edges. They chain calls. They embed it in workflows. They ask it to do things it wasn’t originally designed for. Every jump in value delivered also becomes a jump in cost incurred.

At SaaStr Annual 2025, fal’s co-founder Gorkem Yurtseven summarized the entire predicament in one sentence:

“No one in AI really has 80–90% gross margins… The cost to serve each customer is real. Everyone has less margin — but they’re growing like crazy.”

Every AI interaction—a call, a retrieval, a multi-step chain, a 200K-token prompt—carries a real, variable cost. And the more your users adopt your product, the faster your cloud bill starts acting like a runaway freight train.

But here’s the real problem: you can’t build a sustainable AI business without good margins, but you can’t build a category-leading AI business if you over-optimize margins too early.

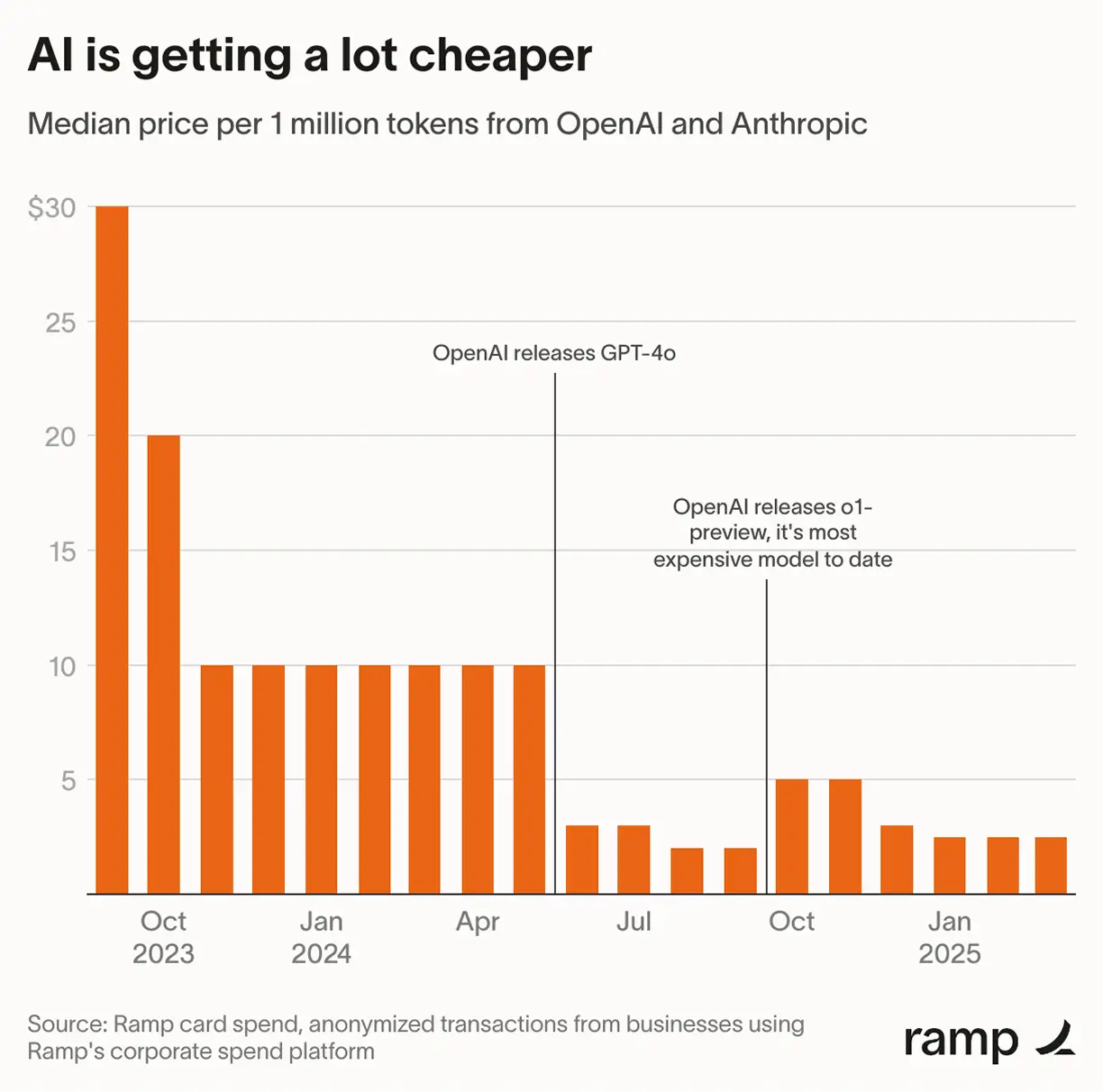

Every time they wanted to optimize pricing based on their costs, the builders at Wispr Flow ran into a different problem: model and inference costs were themselves falling.

Data from Ramp shows that model costs for OpenAI fell by 75% in a year, from March 2024, even as models themselves improved:

Even then, historical data is no guarantee that model costs will keep declining. And unlike Wispr Flow, which trains and serves its own models, most businesses that rely on labs (like OpenAI and Anthropic) don’t enjoy the same 90% margins.

Many businesses operate at significantly lower margins, while others, such as Cursor and Replit, may actively be losing money.

“Once heavy and power users represent more than 10-15% of your user base, per-seat pricing becomes unsustainable. This creates what economists call ‘adverse selection at scale’: the users who extract the most value are also the ones who make the business unprofitable.”

Jason Lemkin, Founder, SaaStr
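To make the threshold in that quote concrete, here is a minimal sketch of how blended gross margin on a flat per-seat plan erodes as the share of heavy users grows. The seat price and per-user serving costs are hypothetical numbers chosen for illustration, not figures from any company mentioned here.

```python
def blended_margin(seat_price, light_cost, heavy_cost, heavy_share):
    """Gross margin per seat when `heavy_share` of seats belong to heavy users."""
    avg_cost = (1 - heavy_share) * light_cost + heavy_share * heavy_cost
    return (seat_price - avg_cost) / seat_price


def break_even_heavy_share(seat_price, light_cost, heavy_cost):
    """Share of heavy users at which the flat seat price exactly covers cost."""
    return (seat_price - light_cost) / (heavy_cost - light_cost)


# Hypothetical inputs: $20 seat, $4/month to serve a light user, $90 for a heavy one.
SEAT_PRICE, LIGHT_COST, HEAVY_COST = 20, 4, 90

for share in (0.05, 0.10, 0.15, 0.20):
    margin = blended_margin(SEAT_PRICE, LIGHT_COST, HEAVY_COST, share)
    print(f"{share:.0%} heavy users -> blended margin {margin:.1%}")

print(f"break-even share: {break_even_heavy_share(SEAT_PRICE, LIGHT_COST, HEAVY_COST):.1%}")
```

Under these assumed costs, the margin turns negative before heavy users reach a fifth of the base. The exact break-even point depends entirely on the cost gap between light and heavy users.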

SaaS gave the world predictable consumption. AI gave unpredictable costs. Your pricing needs to account for both.

Yet, one of the strangest dynamics in AI monetization is that customers don’t always know what “fair” looks like. But they always know when something “feels” unfair.

In traditional SaaS, the value proposition is relatively straightforward. Everyone knows what ‘an additional seat’ or ‘an additional license’ is worth, because the output is fairly standard and repeatable.

AI breaks that model.

You can use an AI tool like DeepL to translate a long document from French to English, or you can wire the same product into a workflow where a DeepL-powered agent detects the language of every new support ticket, translates it, drafts a localized reply, and sends it back through your helpdesk.

Same product. Completely different value surface.

In the first case, the ability to translate is the value driver. In the second, translation is just one step in a larger workflow: a response to the ticket in the customer’s language, without any human in the loop.

That’s the mental shift AI forces on customers. The value proposition moves from: ‘how much am I spending for this tool?’ to ‘how much am I able to achieve for $x?’

Once you cross that line, fairness stops being about just the price point and starts being about how well price tracks outcomes.

Zeb Hermann, GM of v0 (by Vercel), explored how the parent company, Vercel, tapped into this sentiment of fairness to deliver more value for their product Fluid Compute and made it stickier:

Recognizing that charging for that ‘idle time’ would be severely uneconomical for the customer, Vercel engineered systems to reuse idle capacity.

When your product evolves from just ‘accelerating’ a workflow to actually ‘producing the output and standing behind its quality,’ the idea of fairness no longer lives in the sticker price. It lives in whether each output is worth its cost.

Even if you get the structure “right” on paper (a fair pricing metric, equitable guardrails, logical tiers, etc.), your first version of AI monetization is still a hypothesis. When use-cases are evolving, and the product behaves non-linearly, customers become your primary price modellers.

When Gamma launched its big AI update in early 2023, the team didn’t begin with a carefully tuned pricing grid. They began with access: all new users got a fixed number of AI credits to experience the product. When those credits ran out, the AI simply stopped working.

- Grant Lee, Co-founder, Gamma, in conversation with Lenny Rachitsky

Throughout the AI economy, one core truth keeps resurfacing: businesses that can drive viral distribution and build defensibility are becoming more valuable than those with great margins but poor distribution.

This manifests in VC-spiel, from top investors in AI:

- Alex Immerman (Partner) and David George (General Partner, Head of Growth Fund), a16z

This also manifests in how capital is allocated within the AI economy:

Investors are effectively underwriting the belief that if these products become deeply embedded in workflows, pricing power and efficiency will follow — but only if monetization accelerates adoption rather than throttling it.

That’s where pricing comes back into the picture. The teams that do this well treat monetization as a distribution lever: they use pricing to make it effortless to start, safe to scale, and difficult to justify switching away.

Here's how pricing materializes as a distribution/acquisition engine in the real world:

People don’t understand the value until they’re inside the product, experimenting, exploring, and stumbling into their own use cases. The job of pricing is to incentivize this exploration.

Nowhere is this tension more pronounced than within the company that has transformed the application of AI in consumer software: OpenAI.

- Krithika Shankarraman, former VP of Marketing, OpenAI, in conversation with Lenny Rachitsky

In the spirit of encouraging said exploration, AI pricing must attempt to remove as much friction as possible from the first meaningful use.

Obviously, this does not require drastic $0 plans (like with Affinity) to drive customer acquisition, but for an AI monetization strategy to truly be ‘habit-forming’, it needs to check three boxes:

AI products that optimize for adoption inevitably run into the same problem: a small set of power users can drive a disproportionate share of usage and cost; essentially, Jason Lemkin’s ‘adverse selection at scale.’

That’s where guardrails come in: to protect margins and make habits economical to serve. In practice, those guardrails tend to show up in a few patterns:

Yet, aggressive limits risk being perceived as penalties for ‘liking the product too much.’ That’s the opposite of what you want when your monetization strategy is supposed to drive adoption.

Christophe Pasquier, co-founder of Slite and Super, offers a good blueprint for how to avoid that. Their team doesn’t start with arbitrary caps; they start with economics and real usage:

They analyze their cost-to-serve customers (for their best models) and the average usage patterns of consumers, and are able to land on a pricing that is usage-included for 99% of their use-cases.
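One way to picture that exercise is the sketch below. It is an assumption about how such an analysis could be structured, not Slite’s actual method: take observed monthly usage per customer, set the included allowance at the 99th percentile, and derive a flat price from the average cost of serving usage up to that allowance. The function names, the cost per unit, and the target margin are all hypothetical.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def usage_included_price(monthly_usage, cost_per_unit, target_margin, pct=99):
    """Return (included allowance, flat price) covering `pct`% of customers' usage."""
    allowance = percentile(monthly_usage, pct)
    # Usage above the allowance is capped here; it would be handled by a guardrail
    # or an overage charge rather than absorbed into the flat price.
    capped_units = sum(min(u, allowance) for u in monthly_usage) / len(monthly_usage)
    avg_cost = capped_units * cost_per_unit
    return allowance, avg_cost / (1 - target_margin)


# Hypothetical data: 99 typical customers and one extreme outlier.
usage = [100] * 99 + [10_000]
allowance, price = usage_included_price(usage, cost_per_unit=0.02, target_margin=0.6)
print(f"included allowance: {allowance} units, flat price: ${price:.2f}/month")
```

The design choice worth noting is that the outlier does not inflate the price everyone else pays; only usage up to the allowance feeds the cost basis.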

Two important ideas are baked into that thought:

This one sounds far more intuitive than it actually is. Software pricing has always been a way to rationalize how your product delivers value that feels worth the money; hence, businesses have traditionally relied on market or customer signals to inform their pricing decisions.

But with AI and its running costs, this equation gets murkier. Even for potentially the same task, the cost to serve becomes considerably different depending on a few things:

When your unit costs swing this much, a lot of AI vendors gravitate toward exploratory monetization strategies that are ‘safe’ for them but don’t always feel ‘fair’ to customers.

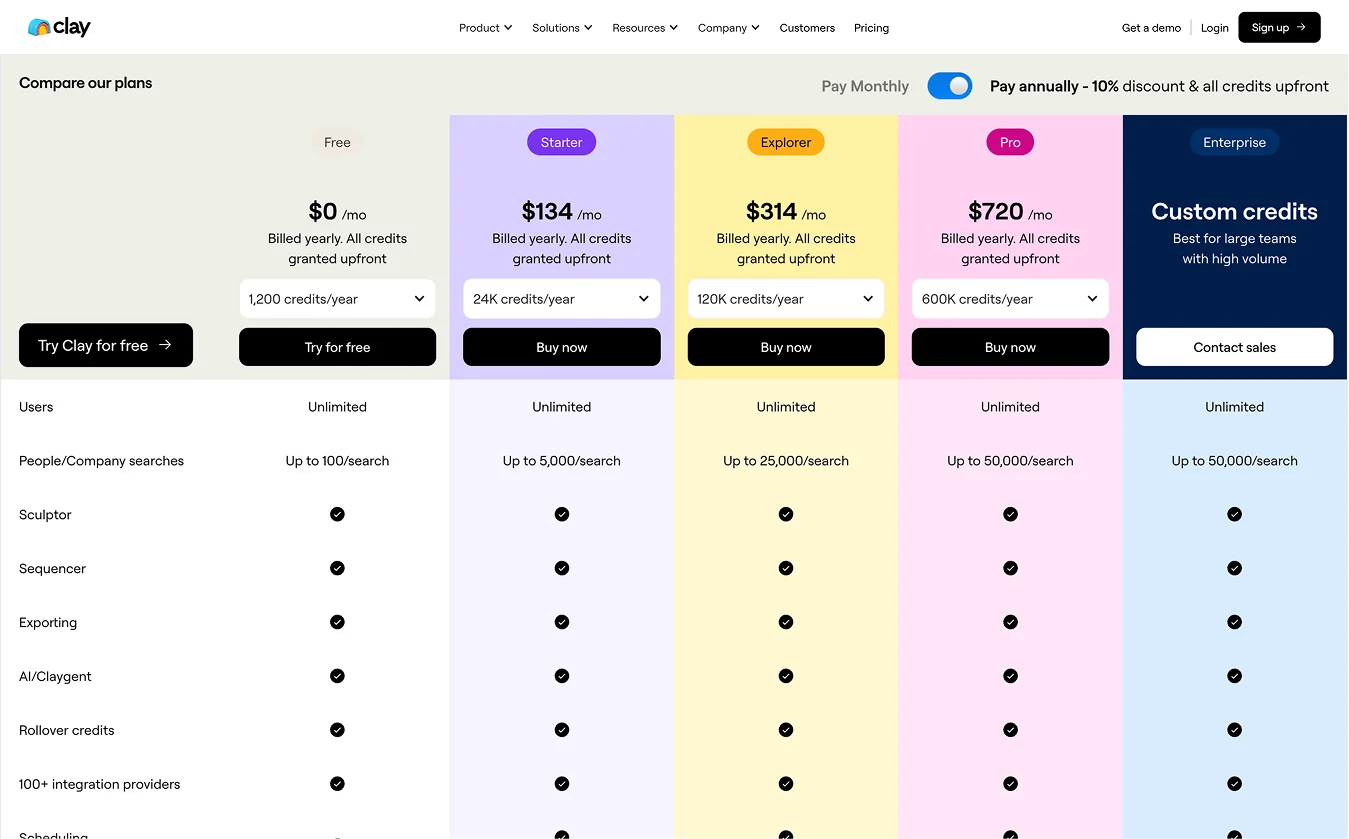

Take credit-based pricing as an example. At its core, credits were supposed to be a single, easy-to-interpret currency through which you could pay for multiple AI capabilities with different underlying costs. In many cases, as with Clay, credit purchases create micro-journeys that encourage product exploration. For that to happen, however, credits need to map onto how customers actually behave within your product.

However, when credits are used as a proxy to pass compute costs on to customers, without tracking how customers experience, interact with, and find value in the product, confusion may ensue. That confusion (as in Replit’s case) stems from customers having to work out for themselves how ‘actions’ map to ‘value’ when pricing does not make the relationship clear.
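As a rough illustration of credits that stay legible to the customer, the sketch below prices credits per customer-visible action rather than per token or per model call, and returns a breakdown the customer can read. The action names and credit prices are hypothetical and do not reflect Clay’s or Replit’s actual schemes.

```python
from collections import Counter

# Hypothetical credit prices, keyed by actions a customer would recognize.
CREDIT_PRICES = {"enrich_contact": 1, "draft_email": 2, "research_company": 5}


def spend(balance, actions):
    """Deduct credits for each action; return (remaining balance, per-action breakdown)."""
    breakdown = Counter()
    for action in actions:
        cost = CREDIT_PRICES[action]
        if cost > balance:
            raise ValueError(f"not enough credits for {action!r}")
        balance -= cost
        breakdown[action] += cost
    return balance, dict(breakdown)


remaining, receipt = spend(10, ["enrich_contact", "draft_email", "research_company"])
print(remaining, receipt)
```

Keeping the price table keyed by recognizable actions means the vendor absorbs the variance in underlying compute cost, which is the trade-off the paragraph above describes.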

This is why landing on transparent pricing metrics is tougher. It forces you to take accountability for how your product impacts your customers and their business. It forces you to rationalize and simplify swinging costs and complex value functions into one or two strong, credible value anchors that your customers will actually recognize and align with.

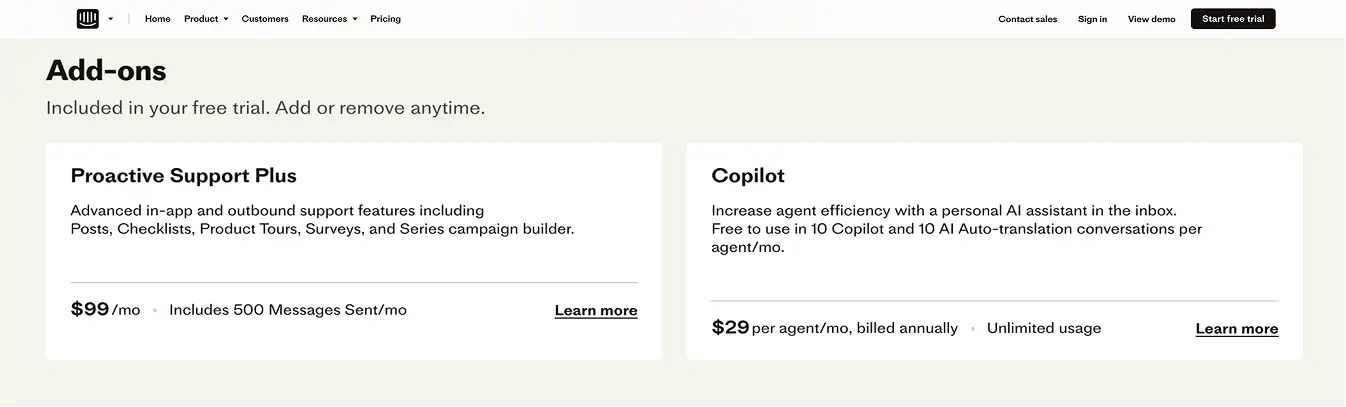

And as Intercom found out, those value signals often sit inside your customer base.

How Customer Feedback Shaped Fin AI’s Outcome-based Pricing Revolution:

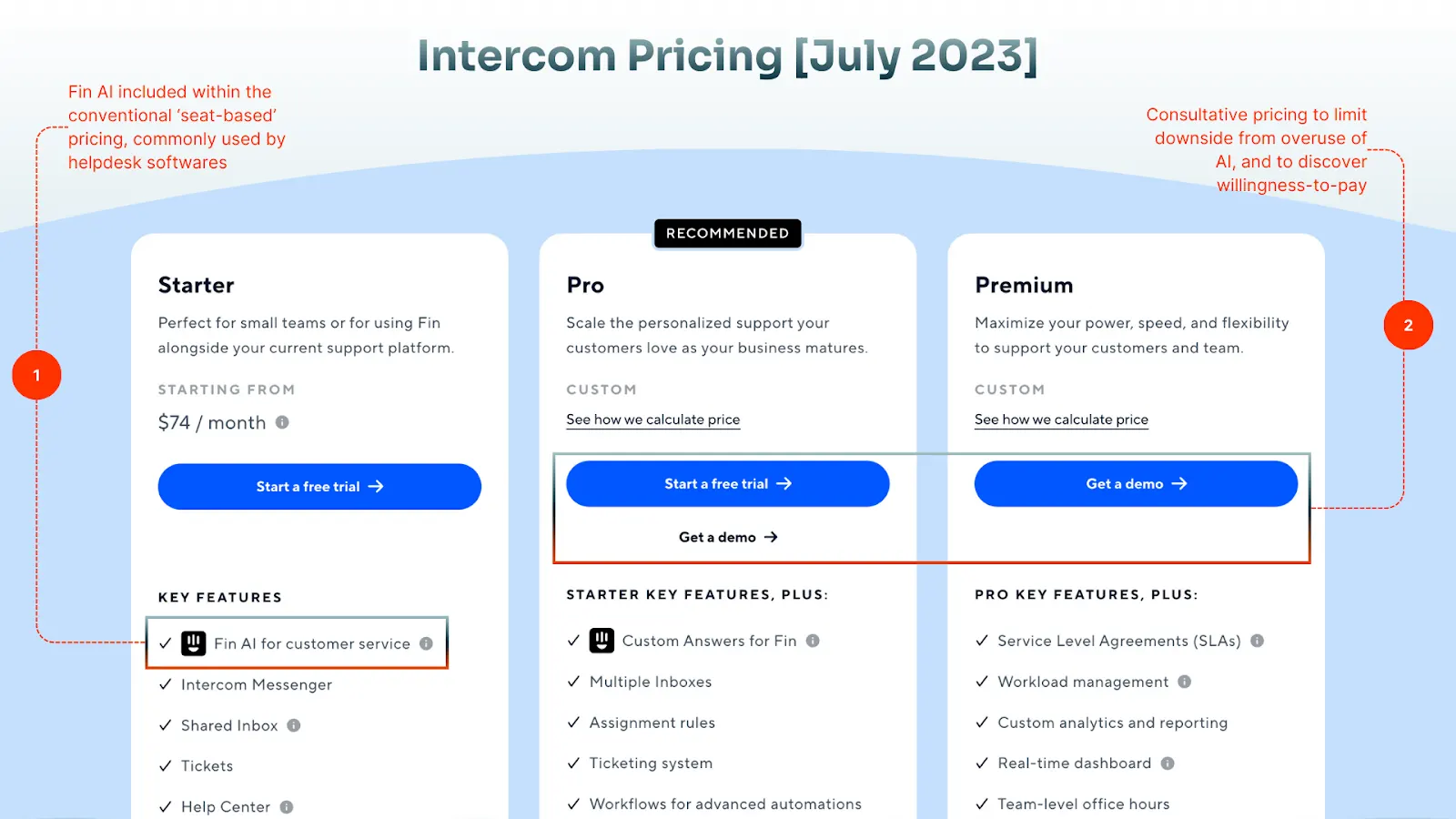

As the first to launch AI agents in customer support, Intercom was operating in a market deeply conditioned to seat-based pricing. Instead of trying to immediately upend the status quo, the company first decided to embed Fin AI within their seat-based plans to drive first-time use and lighthouse customers.

As users and usage exploded, O’Reilly found that including the costs of AI within fixed-value plans became unsustainable.

“When we started to see the power of Fin and just what it could do for customers, we realized that seats weren’t a very viable business [model]. What we were putting into the market was likely going to cannibalize our seat-based revenue.”

But moving the pricing anchor from ‘seats’ to ‘resolutions’ wasn’t as straightforward.

AI agents were a fairly new proposition and completely unprecedented in the customer support industry. Existing pricing models (seat-based or usage-based) still assumed that human users would operate the system and derive value from it, which was plainly inconsistent with the ‘autonomous value creation’ proposition Intercom was trying to project.

So they went back to their customers.

Interestingly, it also encouraged the same behaviors that Intercom wanted to see in the use of the Fin AI agent.

For non-committed users, a $0.99 charge to resolve a simple “where’s my order?” question could feel expensive compared to handing it to an existing support rep.

But, for businesses serious about running lean, hyper-efficient teams, reducing repetitive tickets at scale and avoiding thousands of such low-value human conversations is exactly where the value lies. To them, Fin AI is no longer competing for the procurement budget; it is expensed through the budget that teams would otherwise have to allocate for actual human resources.
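The arithmetic behind that trade-off can be sketched as follows. The $0.99 per-resolution charge is the figure cited above; the conversation records, the `resolved_by_ai` field, and the human cost per ticket are hypothetical assumptions for illustration only, not Intercom’s data model or billing logic.

```python
from decimal import Decimal

PRICE_PER_RESOLUTION = Decimal("0.99")  # per-resolution charge cited in the article


def resolution_bill(conversations):
    """Charge only for conversations the AI agent resolved without a human."""
    resolved = sum(1 for c in conversations if c["resolved_by_ai"])
    return resolved, resolved * PRICE_PER_RESOLUTION


def savings_vs_human(resolved, human_cost_per_ticket):
    """Savings if each resolved ticket would otherwise cost `human_cost_per_ticket`."""
    return resolved * (Decimal(str(human_cost_per_ticket)) - PRICE_PER_RESOLUTION)


# Hypothetical month: three conversations, two resolved by the agent.
conversations = [
    {"id": 1, "resolved_by_ai": True},
    {"id": 2, "resolved_by_ai": False},  # escalated to a human, so not billed
    {"id": 3, "resolved_by_ai": True},
]
resolved, bill = resolution_bill(conversations)
print(f"billed resolutions: {resolved}, bill: ${bill}")
print(f"savings at an assumed $4.00 human cost per ticket: ${savings_vs_human(resolved, '4.00')}")
```

Whether the charge feels expensive or cheap depends on the assumed human cost per ticket, which is why the same price lands differently with committed and non-committed buyers.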

In practical terms, when your pricing is well aligned with your core ICP, it responds to what they subconsciously treat as the real unit of progress in their business. This usually takes into account one or more of the following considerations:

You can get every intellectual part of pricing right and still be only halfway there. The harder half is brutally operational.

What good is customer feedback if charging per outcome becomes a six-month-long exercise (including Engineering, Product, and RevOps support) and your competitor does it faster? What good is identifying the right guardrail if translating usage to pricing metrics becomes a week-long project?

Every pricing exercise in an AI business has ripple effects across your revenue stack. Change a metric, and suddenly your billing engine, usage meters, revenue recognition schedules, and reporting all need to line up behind it.

Change your price point or where your guardrails sit, and your GTM teams need new talk tracks, new objection handling, and sometimes even new compensation structures.

That’s why for most AI companies, it quickly becomes a consultative practice between Product, Engineering, Finance, Sales, and RevOps. You need:

This means any system that expresses your pricing and controls your revenue processes must work the way these teams work, too. It has to:

That’s the final arc that closes the loop on how AI monetization can consistently attract more customers.

If you’re rethinking how pricing can drive acquisition and adoption for your AI product, or want help pressure-testing a new model…

And if you’ve already stitched together your own approach to AI monetization and have war stories, I’d love to know!

Arijit Bose is a Product Marketing Manager at Chargebee, the leading Revenue Growth Management platform that helps SaaS and AI companies design, launch, and scale flexible pricing models—across subscriptions, usage-based, hybrid, and enterprise-led monetization—while managing billing, invoicing, and revenue recognition end to end.

At Chargebee, Arijit leads key initiatives around usage-based pricing and AI monetization, and is the author of Chargebee’s definitive book on usage-based pricing. With bylines in Reuters and G2, he brings journalistic precision to understanding how pricing and monetization are transforming—and being transformed by—companies building for the AI era.